Measure What Matters

Business intelligence for leaders who need decision‑ready signals.

THE VALUE GAP

What Most Teams Can Already See

What You Still Need to Know

You wouldn’t run your whole business on ‘what usually works in other companies’—so why rely on static AI benchmarks instead of seeing how it actually works in your world?

CLOSING THE GAP

We translate AI usage, KPI, and performance data into decision-ready evidence to help you:

Capture deployment signals where they actually matter.

Spot shifts in outcomes across teams, offerings, policies, and workflows so you can amplify what’s working and adjust what isn’t.

Translate those signals into decision-ready evidence for your teams.

Surface where AI is creating value now, where it is falling short, and how existing systems can be repurposed across your organization.

Forecast where AI can create the most value in your organization.

Simulate tomorrow's patterns using today's data to steer rollout, governance, and investment toward the highest-value uses.

Comprehensive analytics

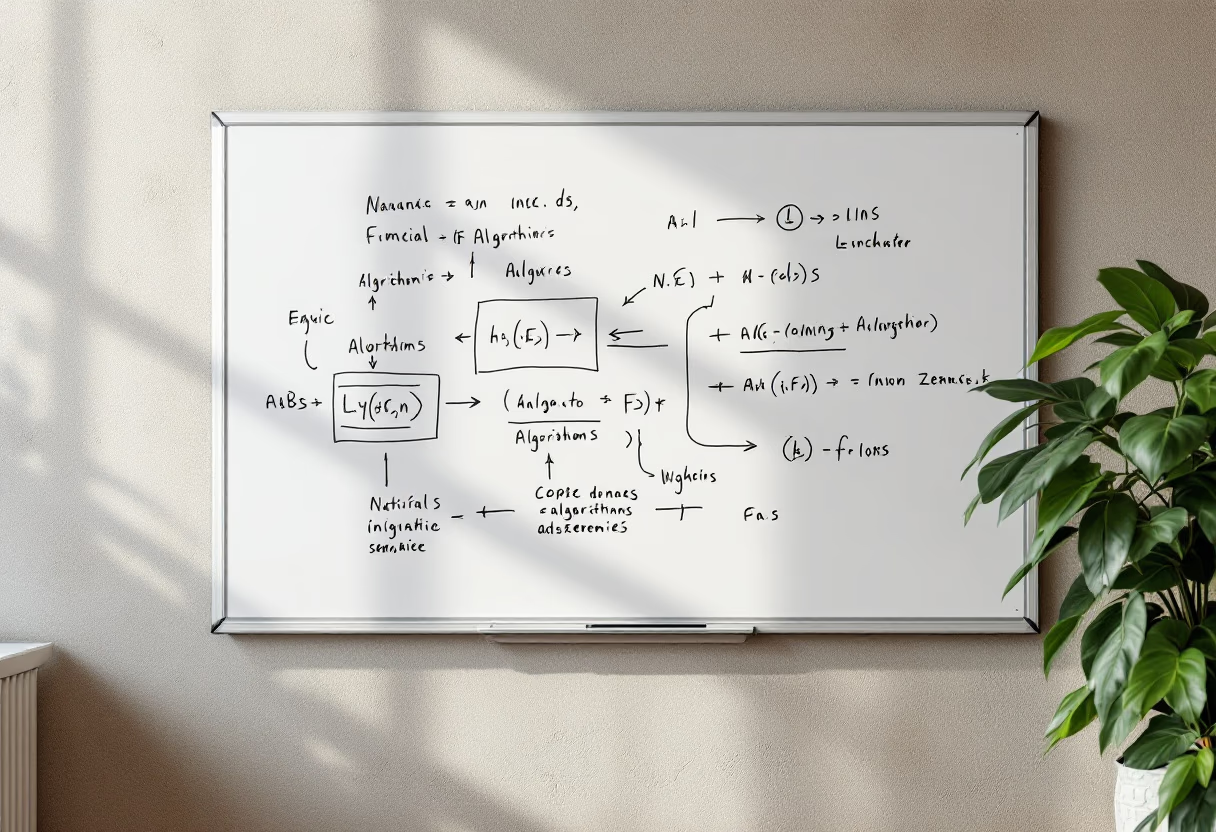

Collaborative team workflows

Custom evaluation criteria

Secure data management

Priority support available

Flexible integrations

A customer-service chatbot looked strong on standard accuracy metrics.

We showed that users were repurposing the chatbot for unsupported tasks.

Then, scorecards showed when, where, and how long users were engaged in unsupported tasks.

Before expanding the chatbot program, we simulated millions of user journeys and interactions, surfacing opportunities to adjust chatbot functionality to align more closely with supported tasks and forecasting time savings and increased ROI.

HOW WE WORK

We work with your stakeholders to identify the AI challenges that matter most, then design data collection and metrics around your actual contexts, risks, and decisions. The result is a BI layer that maps directly to your existing KPIs—not generic model scores.

Our approach evaluates AI in use, not in the lab—so you understand how systems perform when they meet real staff, customers, and workflows in production.

We generate structured evidence through panel-based testing and flag-based measurement of commercial AI systems. Results show where automation is working—and where oversight or redesign is needed.

This evidence layer powers multiple products and decisions—not just a single analytics output.

RECENT ARXIV PUBLICATIONS

◦ CIRCLE: A Framework for Evaluating AI from a Real-World Lens

◦ Reality Check: A New Evaluation Ecosystem Is Necessary to Understand AI's Real World Effects

RECENT SUBSTACK POSTS

◦ Measuring the Cloud: A Lifecycle View of Data Centers

◦ Shifting the AI Evaluation Lens

More from the Civitaas Substack

Research and analysis on what matters for your AI deployment decisions.

Learn how to encourage open dialogue across your organization to improve AI’s outcomes

Guest Essay: Shifting the AI Evaluation Lens

CIRCLE: A Framework for Evaluating AI from a Real-World Lens

Evaluating Generative AI Through Quasi-Experimental Design

How FRAME Generates Systematic Evidence to Resolve the Decision-Maker’s Dilemma

Measuring the Cloud: A Lifecycle View of Data Centers

Making AI Evaluation Deployment Relevant Through Context Specification

OFFERINGS

A customer-service chatbot looked strong on standard accuracy metrics. Signal capture showed that users were repurposing the chatbot for unsupported tasks.

Capture deployment signals where they actually matter.

Turn deployment signals into decisions that leaders can stand behind.

Examine scorecards showed when, where, and how long users were engaged in unsupported tasks.

See what happens next before rollout makes it expensive.

Before the chatbot expanded beyond pilot use, Scale simulated millions of user journeys and interactions based on information from Reveal and Examine, surfacing opportunities to adjust chatbot functionality to align more closely with supported tasks and forecasting time savings and increased ROI.

Civitaas is built around real-world AI evaluation by practitioners who study how systems perform.

Civitaas also directs FRAME (the Forum for Real-World AI Measurement and Evaluation).

Find Out More About FRAME

Machine learning researcher and AI leader with deep expertise in responsible AI, policy, and innovation.

Measurement scientist and linguist with decades of experience designing evaluations of advanced technology in high-consequence settings.

FRAME is a Virginia State University initiative focused on advancing real-world AI measurement science at the sector level.

Learn More at FRAME
